Log messages from /var/log/app.log when web login fails:

~ # cat /var/log/app.log | grep -iE "login|auth|iam"

1970-01-01 00:01:42.942657 iam NOTICE: micro_component.lua(167): Startup status has changed, ==> Starting, uptime:103s, cost 0ms

1970-01-01 00:01:47.706367 iam NOTICE: persist_client_lib.lua(60): persist client init completed, time taken: 3960 ms

1970-01-01 00:01:53.334308 iam WARNING: client_app_base.lua(33): ping bmc.kepler.persistence /bmc/kepler/persistence failed 1 time, err: org.freedesktop.DBus.Error.NoReply: Did not receive a reply. Possible causes include:the remote application did not send a reply, the messagebus security policy blocked the reply, the reply timeout expired,or the network connection was broken., retrying…

1970-01-01 00:01:58.837954 iam WARNING: client_app_base.lua(33): ping bmc.kepler.persistence /bmc/kepler/persistence failed 2 times, err: org.freedesktop.DBus.Error.NoReply: Did not receive a reply. Possible causes include:the remote application did not send a reply, the messagebus security policy blocked the reply, the reply timeout expired,or the network connection was broken., retrying…

1970-01-01 00:02:04.345171 iam WARNING: client_app_base.lua(33): ping bmc.kepler.persistence /bmc/kepler/persistence failed 3 times, err: org.freedesktop.DBus.Error.NoReply: Did not receive a reply. Possible causes include:the remote application did not send a reply, the messagebus security policy blocked the reply, the reply timeout expired,or the network connection was broken., retrying…

1970-01-01 00:02:15.160812 iam NOTICE: persist_client_lib.lua(60): persist client init completed, time taken: 26850 ms

1970-01-01 00:02:15.179387 iam NOTICE: iam_app.lua(143): iam app init start

1970-01-01 00:02:15.239092 iam NOTICE: service_app_base.lua(368): start iam service

1970-01-01 00:02:18.747835 iam NOTICE: mc_admin.lua(86): no service json info

1970-01-01 00:02:29.369217 iam ERROR: C: [WSEC_CBB][208] (UTC) 1970-01-01 00:02:29 KMC is running, not initialize repeatedly before finalized.

1970-01-01 00:02:32.659350 iam NOTICE: key_client_lib.lua(188): start listen on change of keyid from path: /bmc/kepler/KeyService/Kmc/Keys/10

1970-01-01 00:02:32.662682 iam NOTICE: key_client_lib.lua(107): Start Refresh MK Mask.

1970-01-01 00:02:32.754779 iam NOTICE: iam_app.lua(297): iam class init start

1970-01-01 00:02:37.246561 iam NOTICE: iam_app.lua(303): iam class init completed!

1970-01-01 00:02:37.341267 iam NOTICE: iam_app.lua(204): iam app init completed!

1970-01-01 00:02:37.362385 iam NOTICE: micro_component.lua(167): Startup status has changed, Starting ==> InitCompleted, uptime:158s, cost 54440ms

1970-01-01 00:02:47.004635 fructrl NOTICE: pwr_mutation.lua(79): [System:1]map event type = FruInsertionCriteriaMet

1970-01-01 00:02:47.023193 fructrl NOTICE: hotswap_state.lua(89): [System:1]Current event is FruInsertionCriteriaMet, state is M1, uptime: 168s.

1970-01-01 00:02:55.583628 iam NOTICE: object_manage.lua(678): start to fetch hwdiscovery objects

1970-01-01 00:02:56.192029 iam NOTICE: object_manage.lua(667): delay start, delay: 70ms

1970-01-01 00:02:56.202584 iam NOTICE: object_manage.lua(716): fetch hwdiscovery objects completely, took 0 ms, uptime: 177 s

1970-01-01 00:03:00.775690 iam ERROR: app_preloader.lua(232): …alib/account/interface/mdb/account_service_cache_mdb.lua:57: app(iam/service/main) count(1) pcall failed(./opt/bmc/libmc/lualib/mc/mdb/init.lua:848: try get object failed)

1970-01-01 00:03:04.972415 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 3645 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:03:17.252008 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 1276 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:03:18.939384 iam ERROR: app_preloader.lua(232): …alib/account/interface/mdb/account_service_cache_mdb.lua:57: app(iam/service/main) count(2) pcall failed(./opt/bmc/libmc/lualib/mc/mdb/init.lua:848: try get object failed)

1970-01-01 00:03:24.454179 account NOTICE: snmp_patch.lua(23): Because the authentication protocol of User 2 does not match then authentication key,the authentication protocol has been corrected.

1970-01-01 00:03:38.834463 iam ERROR: app_preloader.lua(232): …alib/account/interface/mdb/account_service_cache_mdb.lua:57: app(iam/service/main) count(3) pcall failed(./opt/bmc/libmc/lualib/mc/mdb/init.lua:848: try get object failed)

1970-01-01 00:03:43.274688 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 1542 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:03:51.180322 account NOTICE: account_app.lua(225): infrastructure init end, login rule init start

1970-01-01 00:03:52.312743 account NOTICE: account_app.lua(231): login rule init end, role privilege init start

1970-01-01 00:03:52.323177 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 765 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:04:02.218848 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 6386 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:04:06.968826 iam ERROR: app_preloader.lua(232): …alib/account/interface/mdb/account_service_cache_mdb.lua:57: app(iam/service/main) count(4) pcall failed(./opt/bmc/libmc/lualib/mc/mdb/init.lua:848: try get object failed)

1970-01-01 00:04:10.052302 nsm NOTICE: https_inter_chassis.lua(174): [nginx] Inter chassis auth enabled property changed, INTER_CHASSIS service enabled: false

1970-01-01 00:04:16.226987 iam WARNING: init.lua(1129): Requestor Skynet message queue scheduling delay is 3809 ms (threshold: 500 ms), service_name=:1.80, path=/bmc/kepler/MdbService, interface=bmc.kepler.Mdb, method_name=GetObject

1970-01-01 00:04:33.343034 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account23 added

1970-01-01 00:04:36.465104 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account20 added

1970-01-01 00:04:37.048010 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account19 added

1970-01-01 00:04:37.639591 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account2 added

1970-01-01 00:04:38.014052 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account18 added

2023-08-15 09:20:46.321820 iam NOTICE: account_cache_mdb.lua(79): receive add account signal, account22 added

2023-08-15 09:22:40.380872 iam NOTICE: key_client_lib.lua(181): Domain 10 update key_id to 2

2023-08-15 09:22:50.066665 iam NOTICE: start_profiling.lua(199): profiling finished, start time:1970-01-01 00:01:42, duration:5 min, sent signals:72, received signals:174, sent rpcs:43, received rpcs:1

2023-08-15 09:22:57.666604 iam ERROR: session_service.lua(86): get multi host manager_id(1) object failed

2023-08-15 09:22:57.671089 iam ERROR: session_service.lua(95): The retrieved object is null

2023-08-15 09:22:57.678824 iam ERROR: init.lua(97): session_mdb.lua:36 > session_mdb.lua:52 > session_service.lua:96: The request failed due to an internal service error. The service is still operational.

2023-08-15 09:22:57.693442 iam ERROR: session_service.lua(284): update cli online session error InternalError

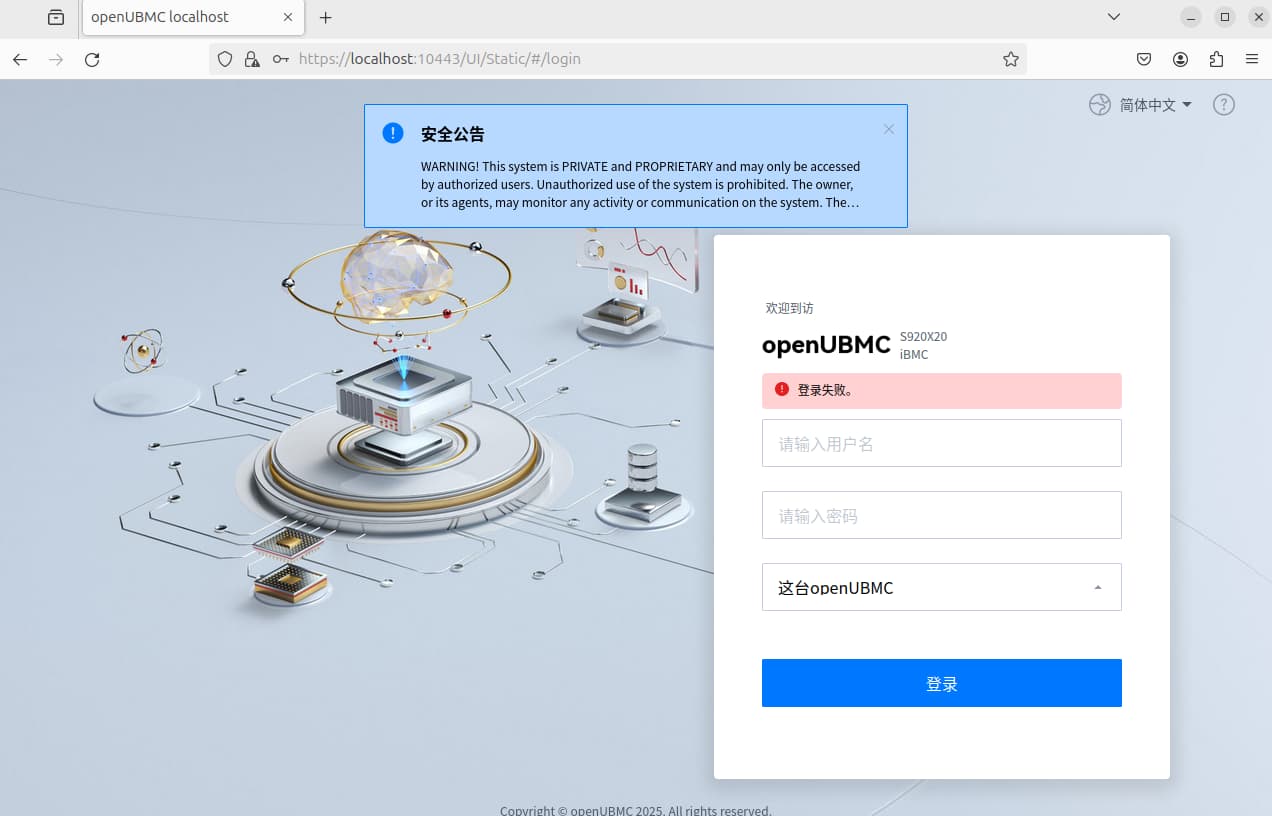

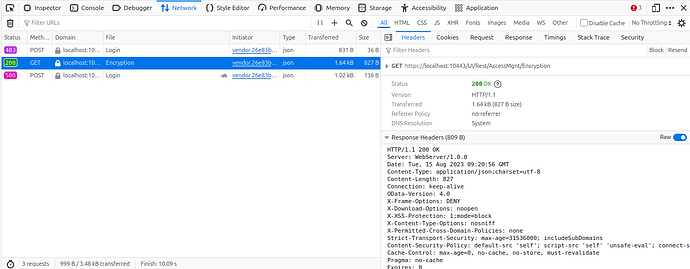

2023-08-15 09:21:00.151883 web_backend NOTICE: route_mapper.lua(388): uri:/UI/Rest/Login, method:get timeout, t1=1692091256856, t2=1692091260137, time=3281

2023-08-15 09:21:05.485840 iam ERROR: session_service.lua(86): get multi host manager_id(1) object failed

2023-08-15 09:21:05.489068 iam ERROR: session_service.lua(95): The retrieved object is null

2023-08-15 09:21:05.495007 iam ERROR: init.lua(97): session_mdb.lua:36 > session_mdb.lua:52 > session_service.lua:96: The request failed due to an internal service error. The service is still operational.

2023-08-15 09:21:05.498627 iam ERROR: operation_logger.lua(620): NewSession: InternalError InternalError: The request failed due to an internal service error. The service is still operational.

2023-08-15 09:21:05.672047 web_backend NOTICE: route_mapper.lua(388): uri:/UI/Rest/Login, method:post timeout, t1=1692091258882, t2=1692091265662, time=6780
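
To cross-check the two `route_mapper.lua` timeout lines against the `iam` errors, a small parser can help. The sketch below is only an illustration: the function name `parse_timeout` and the regular expression are hypothetical, and it assumes that `t1` and `t2` are Unix epoch timestamps in milliseconds (which matches the surrounding log times if those are in UTC) and that `time` is their difference in milliseconds.

```python
import re
from datetime import datetime, timezone

# Hypothetical pattern for the web_backend timeout lines quoted above.
TIMEOUT_RE = re.compile(
    r"uri:(?P<uri>[^,\s]+), method:(?P<method>\w+) timeout, "
    r"t1=(?P<t1>\d+), t2=(?P<t2>\d+), time=(?P<time>\d+)"
)


def parse_timeout(line):
    """Return the fields of a route_mapper timeout line, or None if it does not match."""
    m = TIMEOUT_RE.search(line)
    if m is None:
        return None
    t1, t2, elapsed = int(m["t1"]), int(m["t2"]), int(m["time"])
    return {
        "uri": m["uri"],
        "method": m["method"],
        # Assumption: t1 is epoch milliseconds; drop the sub-second part.
        "start_utc": datetime.fromtimestamp(t1 // 1000, tz=timezone.utc),
        "elapsed_ms": elapsed,
        # Assumption: "time" should equal t2 - t1.
        "consistent": t2 - t1 == elapsed,
    }


if __name__ == "__main__":
    sample = (
        "2023-08-15 09:21:05.672047 web_backend NOTICE: route_mapper.lua(388): "
        "uri:/UI/Rest/Login, method:post timeout, "
        "t1=1692091258882, t2=1692091265662, time=6780"
    )
    print(parse_timeout(sample))
```

Under these assumptions, the POST to `/UI/Rest/Login` started at 09:20:58 and was reported as timed out 6780 ms later, right after the `NewSession: InternalError` entry logged at 09:21:05.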